Abstract

When multiple nonstationary independent source signals pass through a linear instantaneous time-varying mixing system, separating them adaptively is difficult. We therefore first give a formal statement of the adaptive blind source separation (BSS) problem and compare it with the traditional BSS problem. We then propose an adaptive blind identification and separation method based on the variable learning rate equivariant adaptive source separation via independence (EASI) algorithm, and analyze the scope and conditions under which this algorithm applies. Adaptive BSS simulation results show that the variable learning rate EASI algorithm separates the sources better than the fixed learning rate EASI and recursive least-squares (RLS) algorithms.

Highlights

- Online real-time BSS problems are formally expressed and compared to traditional BSS issues.

- This paper applies a variable learning rate EASI algorithm to the adaptive BSS problem.

- The similarity coefficient and Vestigial Quadratic Mismatch (VQM) are used as quantitative evaluation indicators of the waveform similarity of the separated source signals.

- A simulation is designed to verify the correctness of our approach. The fixed learning rate EASI and RLS algorithms are used for comparison.

1. Introduction

There is an increasing demand for dynamic systems to be safer and more reliable [1]. In mechanical fault diagnosis, the extraction of fault characteristic information is indispensable [2], although the complexity of mechanical devices means that mechanical vibration signals usually have mixed and multipath effects. Furthermore, there may be some frequency superposition in the signals, so it is challenging to extract feature information. When multiple signals are statistically independent, the technique of blind source separation (BSS) can identify the original signals through the use of sensors, regardless of the location information, signal quantity, and so on [3].

Recently, BSS has attracted considerable attention. It has been widely used in many practical applications, such as telecommunications [4], biomedical signal processing [5], vibration source enumeration and identification [6], image processing [7], voice signal classification [8], fault diagnosis [9], and structural health monitoring [10, 11]. The earliest application of BSS was in the failure analysis of a gearbox [12]. BSS can be conducted through two popular approaches, namely second-order blind identification [13] and independent component analysis (ICA) [8]. Traditional BSS methods have two main disadvantages [14]: they operate offline, and require the entire signal to perform separation and identification. The use of offline BSS methods for online problems is time-consuming and inefficient.

The first adaptive form of BSS was proposed by Jutten and Herault [15]. Cardoso and Laheld developed a different adaptive approach [16], using the ‘relative gradient’ adaptive algorithm based on serial updating, whereby the separating matrix is updated in each step when a new sample is received. These adaptive algorithms are known as equivariant adaptive source separation via independence (EASI). However, because of their constant learning rate (step-size), typical EASI algorithms face a trade-off between stability and convergence speed [17].

Methods with an adaptive learning rate have been widely studied, as a variable learning rate makes the algorithm effective in nonstationary environments. Xie et al. [18] adjusted the learning rate using mutual information, and Zhang et al. [19] changed the learning rate by estimating a performance index. For time-varying mixing channels, Yuan et al. [20] proposed the variable learning rate sign natural gradient algorithm. Shifeng et al. [21] developed a variable learning rate algorithm that combines two adaptive separation systems, while Gao et al. [22] designed a performance-index-based EASI method for direct sequence code division multiple access (DS-CDMA) systems. Chambers et al. [23] presented a method for dealing with abrupt changes in the mixing matrix, and Enescu et al. [24] reported an adaptive algorithm that can separate noisy time-varying mixtures. DeYoung et al. [25] applied BSS techniques to mixtures of digital communication signals in which the sources are mobile or the environment is changing, so that the mixing matrix varies with time. Their results indicate that the main difficulty in the separation phase is the ill-conditioned nature of the channel matrix. Chen et al. [26] used a retrospective online EASI method to deal with sudden changes in a time-varying environment. Bulek et al. [27] investigated a time-varying mixing matrix with stationary source signals. In an earlier article [28], we proposed two algorithms, recursive least-squares (RLS) and Recursive-EASI (R-EASI), to solve the problem of time-varying source signals and time-varying systems simultaneously.

However, none of the above studies describes the adaptive BSS problem in detail. Furthermore, these previous methods focus on algorithm performance and do not evaluate the separation results. In this paper, we formally describe the adaptive BSS problem and propose a variable learning rate EASI-based method for separating nonstationary source signals in a slowly time-varying environment. The method differs from our earlier algorithms [28]: R-EASI selects a reference point, whereas the method proposed here uses a variable learning rate, and we present a detailed theoretical derivation. In the simulation section, we describe the parameter settings, evaluation indexes, and simulation analysis.

The primary contributions of this paper can be summarized as follows:

1) The problem of online real-time BSS under a time-varying system and nonstationary source signals is formally expressed and compared with the traditional BSS problem. The differences between the two problem types are explained in detail.

2) This paper applies a variable learning rate EASI algorithm to the adaptive BSS problem with a slowly time-varying mixing matrix and nonstationary source signals. In addition, the scope and conditions of the method are identified.

3) The similarity coefficient and Vestigial Quadratic Mismatch (VQM) are used as quantitative evaluation indexes of the waveform similarity of the separated source signals.

4) We design a simulation to verify the correctness of our method. The fixed learning rate EASI and RLS algorithms are used for comparison.

The remainder of this article is arranged as follows. Section 2 introduces the model and the concept of adaptive BSS. Section 3 describes the process of our EASI method in detail. Section 4 presents the simulation verification procedure and results. Finally, we state the conclusions to this study and ideas for future research in Section 5.

2. Adaptive BSS model for linear instantaneous mixing systems and nonstationary source signals

2.1. Description of adaptive BSS

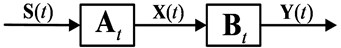

In traditional BSS, the mixing matrix is constant. However, in reality, the way in which signals mix varies with time. We call the linear instantaneous mixing matrix . The model can be described as [25, 27, 28]:

where denotes the observed signals, , and denotes the observation noise. represents the nonstationary source signals. Without considering any observation noise:

In our setting, the separating matrix is unknown but should vary with time, and the separated nonstationary independent signals can be obtained by:

and the separated signals latest value . We hope that the and are as similar as possible. Fig. 1 illustrates the adaptive BSS model for linear instantaneous mixing systems and nonstationary source signals.
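As a minimal sketch of the noise-free model described above, the following Python snippet mixes two sources sample by sample with a mixing matrix whose first element drifts slowly, and then recovers them with the ideal separating matrix (the inverse of the current mixing matrix). The names `A_t`, `s_t`, `x_t`, `B_t` and `y_t`, the 2×2 size, the drift law, and the sine and square-wave sources are all illustrative assumptions and are not the settings used in this paper. In a real blind setting the mixing matrix is unknown, so the separator has to be estimated adaptively.

```python
import math

def mixing_matrix(t, drift=1e-4):
    """Hypothetical slowly time-varying 2x2 mixing matrix A(t)."""
    return [[1.0 + drift * t, 0.5],
            [0.3, 1.0]]

def matvec(m, v):
    """Matrix-vector product for a list-of-rows matrix."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]

def inverse_2x2(m):
    """Closed-form inverse of a 2x2 matrix."""
    (a, b), (c, d) = m
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]

def sources(t):
    """Two illustrative sources: a sine wave and a square wave."""
    sine = math.sin(0.05 * t)
    square = 1.0 if math.sin(0.02 * t) >= 0 else -1.0
    return [sine, square]

observed, recovered = [], []
for t in range(1000):
    s_t = sources(t)
    A_t = mixing_matrix(t)
    x_t = matvec(A_t, s_t)      # x(t) = A(t) s(t), observation noise ignored
    B_t = inverse_2x2(A_t)      # ideal separator; unknown in a real blind setting
    y_t = matvec(B_t, x_t)      # y(t) = B(t) x(t)
    observed.append(x_t)
    recovered.append(y_t)
```

Because the separator here is the exact inverse, the recovered samples match the sources up to rounding error. An adaptive algorithm can only approximate this, and only up to the order and scale of the outputs.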

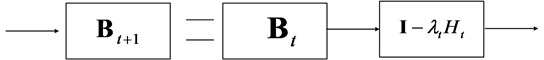

The separating matrix is constructed using either a one-stage or two-stage separation system [16, 29]. In the one-stage method, is obtained directly by minimizing/maximizing some contrast function. In this paper, we focus on the two-stage approach, in which the observations are first preprocessed by an × whitening matrix , and then an orthogonal matrix is used to separate the source signals. Finally, we obtain the total separating matrix . Fig. 2 illustrates this process.

Fig. 1. Adaptive BSS model for linear instantaneous time-varying mixing systems and nonstationary source signals

Fig. 2. Two-stage separation adaptive BSS

2.2. Model assumptions of adaptive BSS

BSS problems have multiple solutions [28]. To identify the optimal solution, five basic assumptions are proposed, which are slightly different from those used in traditional BSS [14]:

1) satisfies .

2) is a nonstationary random process.

3) is statistically independent at each time, and at most one component in obeys a Gaussian distribution.

4) The number of observed signals is greater than or equal to the number of source signals .

5) is linearly mixed and the system is slowly time-varying.

2.3. Comparison of adaptive BSS and traditional BSS

Table 1 summarizes the differences between adaptive BSS and traditional BSS in terms of the source signals and mixing matrix.

Table 1. Differences between adaptive BSS and traditional BSS

| Problem | Source signal | Mixing matrix | Processing requirement |
| --- | --- | --- | --- |
| Traditional BSS | Stationary | Time-invariant | Offline and batch processing |
| Adaptive BSS | Nonstationary | Time-varying | Online and real time |

3. Theoretical derivation of linear time-varying mixed BSS using EASI

3.1. Notion of equivariance

The EASI algorithm was first proposed by Cardoso, who used the notion of equivariance to prove that the performance of EASI is independent of the mixing matrix [29] when the mixing matrix is constant. In the same way, a blind estimate of is simply a function of . This can be expressed as:

where is the estimator of and represents this functional relationship. In equivariance theory, when the data conversion is equivalent to some parameter conversion, both and are multiplied on the left by a matrix . In this case, is equivariant when it satisfies:

Eq. (6) shows that is given by , which means that it is only related to and is independent of : this is also called uniform performance.

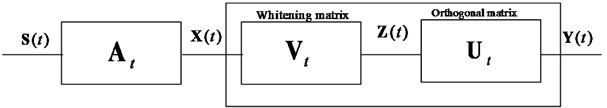

3.2. Serial matrix updating

The core concept of EASI is serial updating [16]. Serial updating involves choosing a × matrix-valued function , which is used to update the separating matrix according to:

where is the learning rate, which is fixed in the traditional EASI algorithm. Fig. 3 illustrates this update process for the separating matrix. The global system can be similarly updated by:

Eq. (8) also shows that the overall system is not dependent on .
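The independence from the mixing matrix can be checked numerically. The sketch below assumes the serial update takes the standard form from [16], B ← B − λ H(y) B with y = B x, and uses the illustrative choice H(y) = y yᵀ − I; the helper names, the two mixing matrices, the starting global system `C0`, and the test signals are hypothetical. Two runs with different mixing matrices but the same initial global system C = B A should produce the same global system at every step.

```python
import math

def matmul(a, b):
    """Product of two list-of-rows matrices."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def matvec(m, v):
    """Matrix-vector product."""
    return [sum(x * y for x, y in zip(row, v)) for row in m]

def inverse_2x2(m):
    """Closed-form inverse of a 2x2 matrix."""
    (a, b), (c, d) = m
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]

def H(y):
    """Illustrative matrix-valued function H(y) = y y^T - I."""
    n = len(y)
    return [[y[i] * y[j] - (1.0 if i == j else 0.0) for j in range(n)]
            for i in range(n)]

def serial_update(B, x, lam):
    """One serial update: B <- B - lam * H(y) B, with y = B x."""
    y = matvec(B, x)
    HB = matmul(H(y), B)
    return [[b - lam * hb for b, hb in zip(b_row, hb_row)]
            for b_row, hb_row in zip(B, HB)]

def global_system_after(A, C0, source_samples, lam=0.01):
    """Run the update on x = A s and return the global system C = B A."""
    B = matmul(C0, inverse_2x2(A))      # chosen so that B A = C0 at the start
    for s in source_samples:
        B = serial_update(B, matvec(A, s), lam)
    return matmul(B, A)

samples = [[math.sin(0.1 * t), math.cos(0.37 * t)] for t in range(200)]
C0 = [[0.5, 0.1], [0.2, 0.8]]
C_a = global_system_after([[1.0, 0.5], [0.3, 1.0]], C0, samples)
C_b = global_system_after([[2.0, -1.0], [1.0, 3.0]], C0, samples)
```

`C_a` and `C_b` agree up to rounding error, which is the uniform performance property: the trajectory of the global system depends on the sources and on its own starting point, not on the particular mixing matrix.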

Fig. 3. Serial update of a matrix

3.3. EASI algorithm

Using the notion of ‘relative gradient’ [16], the function in Eq. (7) can be considered as:

In Eq. (9), is an arbitrary differentiable function. Therefore, can be updated according to:

Let be the unit matrix. Exactly as in Eq. (10), according to [16], the serial whitening matrix can be updated by:

where and is the signal after whitening. The orthogonal matrix can also be updated by:

The purpose of Eq. (11) and Eq. (12) is to obtain the updated . Then, according to Eq. (11), Eq. (12), and , we have:

In this equation, 0, and so for , and are independent of each other. For arbitrary nonlinear functions , we define:

The EASI algorithm for adaptive source separation is based on Eq. (7) and Eq. (15):

To maintain uniform performance, which means that can take any values, we adjust to preserve stability. Eq. (17) describes the normalized form [16]:
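For readers who want to experiment, here is a minimal sketch of one normalized EASI step. Since the equations did not survive in this text, the update is assumed to be the standard normalized form given by Cardoso and Laheld [16]: B ← B − λ [ (y yᵀ − I) / (1 + λ yᵀy) + (g(y) yᵀ − y g(y)ᵀ) / (1 + λ |yᵀg(y)|) ] B. The cubic nonlinearity, the fixed mixing matrix, the uniform sources, and the step size are illustrative assumptions and not the settings of this paper.

```python
import random

def matmul(a, b):
    """Product of two list-of-rows matrices."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def matvec(m, v):
    """Matrix-vector product."""
    return [sum(x * y for x, y in zip(row, v)) for row in m]

def cubic(y):
    """Assumed nonlinearity g(y) = y^3, applied componentwise."""
    return [v * v * v for v in y]

def easi_step(B, x, lam, g=cubic):
    """One normalized EASI update of the separating matrix B."""
    n = len(B)
    y = matvec(B, x)
    gy = g(y)
    yy = sum(v * v for v in y)                        # y^T y
    ygy = abs(sum(a * b for a, b in zip(y, gy)))      # |y^T g(y)|
    H = [[(y[i] * y[j] - (1.0 if i == j else 0.0)) / (1.0 + lam * yy)
          + (gy[i] * y[j] - y[i] * gy[j]) / (1.0 + lam * ygy)
          for j in range(n)] for i in range(n)]
    HB = matmul(H, B)
    return [[b - lam * hb for b, hb in zip(b_row, hb_row)]
            for b_row, hb_row in zip(B, HB)]

# Usage: adapt B sample by sample on a fixed mixture of two uniform sources.
rng = random.Random(0)
A = [[1.0, 0.5], [0.3, 1.0]]
B = [[1.0, 0.0], [0.0, 1.0]]
for _ in range(5000):
    s = [rng.uniform(-1.732, 1.732), rng.uniform(-1.732, 1.732)]
    B = easi_step(B, matvec(A, s), lam=0.01)
global_system = matmul(B, A)   # close to a scaled permutation if separation succeeds
```

The first term of `H` performs the whitening and the second, skew-symmetric term performs the rotation, so a single update combines both stages of the two-stage scheme. The denominators bound the size of each step, which is what keeps the update stable when a sample is unusually large.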

3.4. Advantages of EASI algorithm

EASI is a gradient-based algorithm that uses nonlinear decorrelation to estimate the independent components. It has several advantages over other ICA algorithms [30].

1) EASI is an adaptive and online algorithm, which makes it suitable for problems in which the underlying distributions of input characteristics vary over time.

2) EASI is equivariant, and the convergence speeds, interference suppression levels, and other properties are only related to the signals’ normalized distributions and are independent of the mixing matrix.

3) EASI offers improved parallelism by combining whitening with separation, because other methods whiten the input features during a preprocessing step.

4) EASI is computationally efficient, as its basic operations only require addition and multiplication.

3.5. Variable learning rate EASI algorithm

In the EASI algorithm, the learning rate is closely related to the convergence speed and steady-state error. When the learning rate is fixed, values that are too large will make the algorithm unstable, whereas values that are too small will increase the convergence time [17].

Given the limitations of a fixed learning rate, we use a typical time-descending learning rate given by [31, 32]:

where , , and are constants.
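The exact schedule used in this paper is not reproduced in this text, so the sketch below shows a different, generic time-descending schedule purely to illustrate the idea: a large rate early on for fast convergence, decaying toward a small floor for low steady-state error. The function name, the form λ(t) = max(λ_min, λ₀ / (1 + t/τ)), and the constants `lam0`, `tau`, and `lam_min` are all hypothetical.

```python
def learning_rate(t, lam0=0.02, tau=200.0, lam_min=0.001):
    """Hypothetical time-descending schedule: lam0 / (1 + t / tau), floored at lam_min."""
    return max(lam_min, lam0 / (1.0 + t / tau))

# Sample the schedule at a few time indices to see the decay.
schedule = [learning_rate(t) for t in range(0, 10001, 2000)]
```

In an adaptive loop, the value returned for the current sample index would replace the constant step size in the separating-matrix update. The floor keeps the algorithm able to track a slowly varying mixing matrix after the initial transient.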

3.6. Scope and conditions of variable learning rate EASI algorithm

1) The sources are statistically independent.

2) The source signals are non-stationary and the mixing matrix represents slow instantaneous linear mixing.

3) The number of observed signals is greater than or equal to the number of source signals, and the number of signals cannot be changed.

4. Simulation verification

4.1. Simulation dataset and parameter settings

We design simulations to demonstrate the performance of our variable learning rate EASI-based adaptive BSS method. We choose three different signals: a nonstationary wave , a square wave , and a triangular wave . These are specified as follows:

, , and are independent of each other. For , which is not periodic, the expectation over the intervals [1, 400 s] and [201, 600 s] is 0.0118 and 0.0082, and the root mean square is 1.4982 and 1.0013, respectively. Because the expectation and root mean square change with time, is nonstationary. The linear instantaneous mixing matrix is slowly time-varying. We use MATLAB’s “rand” function to randomly generate as follows:

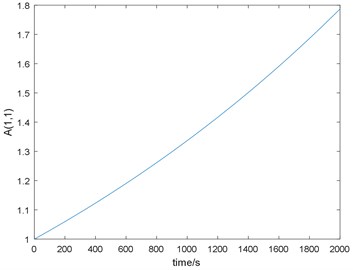

Then, we allow the first element of to vary slowly:

We have thus designed nonstationary sources and a slowly time-varying mixing matrix. The nonlinear functions are related to the signal distributions. When is a sub-Gaussian signal, the choice may be . When is a super-Gaussian signal, the usual choice is [17]. In this paper, . The sampling length is 2000 s.

For comparison, we implemented the fixed learning rate EASI method, the RLS method, and the variable learning rate EASI method. For the fixed learning rate algorithms, after extensive experimentation, we set the EASI learning rate to 0.0017 and the RLS learning rate to 0.982. For the variable learning rate parameters, we set 0.014, 200, and 0.025.

4.2. Source separation evaluation index

4.2.1. Similarity coefficient

We wish to measure waveform similarity in a way that is insensitive to the order and amplitude of the output components. Most existing algorithms use the performance index (PI) as an evaluation metric, representing the closeness between and the mixing matrix [33]. The similarity coefficient between the th separated signal source after normalization and the th real random fault source is given by:

when the only difference in and is in their amplitudes, 1. When is independent of , 0. Therefore, we expect to achieve values close to 1. The similarity coefficient between the th separated signal source and the th separated signal source after normalization is defined as:

when is independent of , 0. We therefore hope to achieve values close to 0.
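A minimal sketch of such a coefficient is given below, assuming it is the normalized inner product of the two waveforms, Σ a(t) b(t) / sqrt(Σ a(t)² · Σ b(t)²). This form matches the properties stated above (a value of 1 when the signals differ only in amplitude and about 0 when they are unrelated), but the function name and the exact formula are assumptions, because the original equations are not reproduced in this text.

```python
import math

def similarity_coefficient(a, b):
    """Normalized inner product of two equal-length waveforms (assumed definition)."""
    num = sum(x * y for x, y in zip(a, b))
    den = math.sqrt(sum(x * x for x in a) * sum(y * y for y in b))
    return num / den

source = [1.0, -2.0, 3.0, 0.5]
scaled = [3.0 * v for v in source]        # same shape, different amplitude
same_shape = similarity_coefficient(scaled, source)
unrelated = similarity_coefficient([1.0, 0.0, -1.0, 0.0], [0.0, 1.0, 0.0, 1.0])
```

Because the separated signals may come out in any order, in practice the coefficient is computed for every pair of separated and source signals, and each output is matched to the source with which it has the largest absolute coefficient.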

4.2.2. Vestigial quadratic mismatch

The Vestigial Quadratic Mismatch (VQM) between the separated signals and the real random fault source can be used as a performance index [34]. This metric is calculated as:

where:

when the value of VQM is less than −23 dB, the effect of adaptive BSS can be considered to be very good.
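As a rough sketch, the snippet below assumes that the VQM is the mean squared mismatch between the separated signal and the source, each first scaled to unit power, expressed in decibels. This is one common reading of the definition in [34]; the exact normalization used in this paper is not reproduced in this text, so the function names and formula are assumptions.

```python
import math

def unit_power(sig):
    """Scale a waveform so that its mean square value is 1."""
    power = sum(v * v for v in sig) / len(sig)
    return [v / math.sqrt(power) for v in sig]

def vqm_db(separated, source):
    """Assumed VQM: normalized mean squared mismatch in dB."""
    y = unit_power(separated)
    s = unit_power(source)
    mismatch = sum((a - b) ** 2 for a, b in zip(y, s)) / len(s)
    if mismatch == 0.0:
        return float("-inf")            # perfect match
    return 10.0 * math.log10(mismatch)  # source power is 1 after normalization

perfect = vqm_db([2.0, -2.0, 2.0, -2.0], [1.0, -1.0, 1.0, -1.0])
poor = vqm_db([2.0, 2.0, -2.0, -2.0], [1.0, -1.0, 1.0, -1.0])
```

More negative values indicate a closer match. A separated signal can also be recovered with inverted sign, so a practical implementation would flip the sign of the output when its similarity coefficient with the source is negative before computing the mismatch.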

4.3. Simulation results

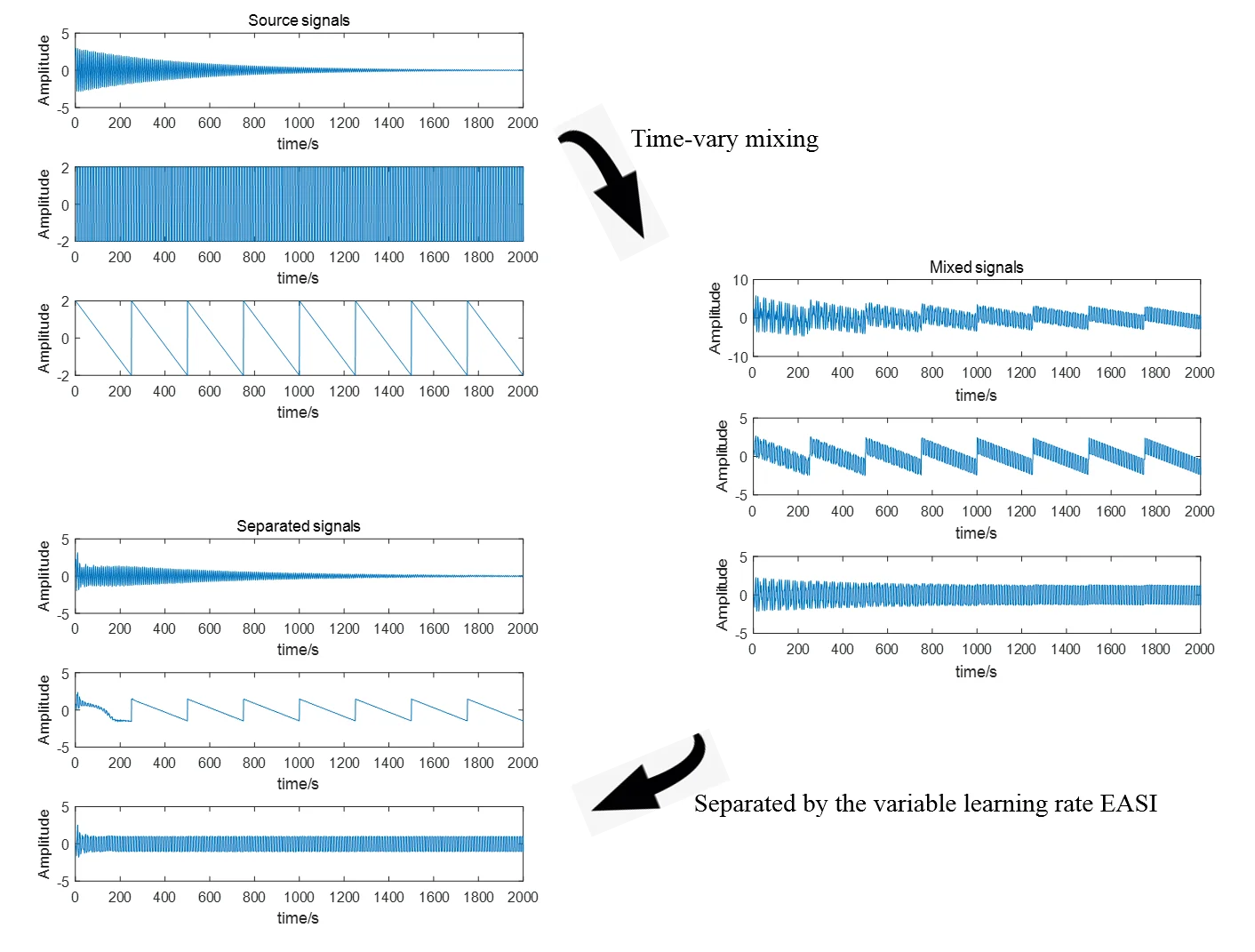

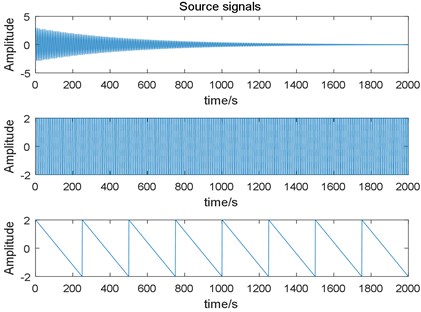

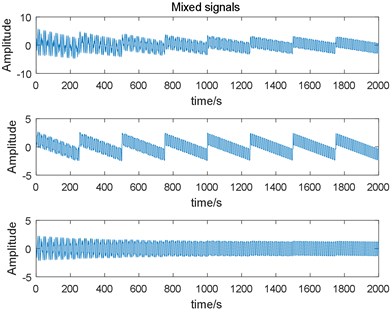

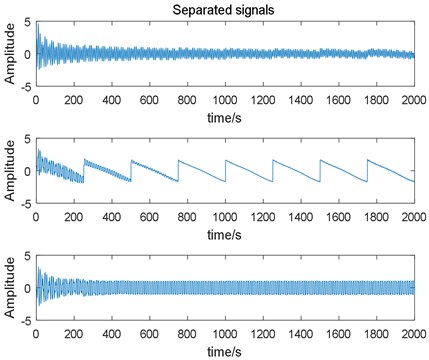

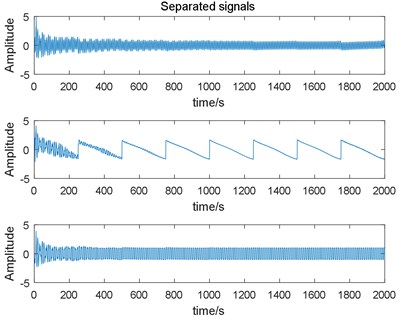

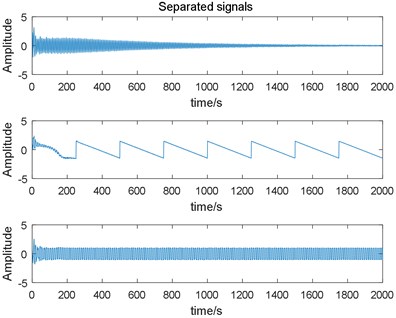

The source signals used in this simulation are shown in Fig. 4. From top to bottom, they are a nonstationary wave , a square wave , and a triangular wave . After linear instantaneous mixing, the observed signals are shown in Fig. 5. Figs. 6-8 show the separation results of the fixed learning rate EASI, the fixed learning rate RLS, and the variable learning rate EASI methods, respectively.

Fig. 4. Three different nonstationary source signals

Fig. 5. Signals after linear mixing

Fig. 6. Estimated signals output by the fixed learning rate EASI algorithm

Fig. 7. Estimated signals output by the fixed learning rate RLS algorithm

Fig. 8. Estimated signals output by the variable learning rate EASI algorithm

Table 2 presents the separation results achieved by EASI, RLS, and variable learning rate EASI. Table 3 demonstrates the independence of the source signals. Table 4 lists the similarity between the separated signals given by EASI, RLS, and variable learning rate EASI.

Fig. 9 shows the variation of the first element of the mixing matrix over time.

Table 2. Separation results of the three methods

| Method | Corresponding relationship | Similarity coefficient | VQM (dB) |
| --- | --- | --- | --- |
| EASI | responding to | 0.8233 | −3.2300 |
| | responding to | 0.9446 | −9.0979 |
| | responding to | 0.9799 | −13.8283 |
| RLS | responding to | 0.8066 | −2.6945 |
| | responding to | 0.9446 | −9.0652 |
| | responding to | 0.9863 | −15.5217 |
| Variable learning rate EASI | responding to | 0.9575 | −10.4256 |
| | responding to | 0.9845 | −14.8274 |
| | responding to | 0.9911 | −21.5032 |

Table 3. Similarity and independence between source signals

| Corresponding relation between source signals | Similarity coefficient between source signals | p-value of Chi-square test |
| --- | --- | --- |
| responding to | 0.0017 | 0.4895 |
| responding to | −0.0027 | 0.4012 |
| responding to | 0.0013 | 1 |

Table 4. Similarity between separated signals

| Method | Corresponding relationship | Similarity coefficient |
| --- | --- | --- |
| EASI | responding to | 0.2383 |
| | responding to | 0.3525 |
| | responding to | 0.1662 |
| RLS | responding to | 0.0982 |
| | responding to | 0.2144 |
| | responding to | 0.1557 |
| Variable learning rate EASI | responding to | 0.0531 |
| | responding to | 0.0172 |
| | responding to | 0.0447 |

Fig. 9. The variation of A1(1,1)

4.4. Simulation results analysis

The simulation results can be analyzed as follows:

1) The first separated signal in Fig. 6 corresponds to the first source signal in Fig. 4, the second separated signal corresponds to the third source signal , and corresponds to . The order of the separated signals is therefore not determined, which is the well-known permutation ambiguity of BSS.

2) From Fig. 4, we can see that is nonstationary. In Table 3, a p-value close to 0 would indicate that the source signals are not independent. The p-values obtained (0.4012 to 1) are far from 0, and the similarity coefficients between the source signals are close to 0. Therefore, the sources are nonstationary and independent, and can be used to verify the algorithm.

3) The shapes of the identified signals are similar to those of the source signals (see Fig. 4 and Figs. 6-8) in the different methods. This result is confirmed by Table 2. The fixed learning rate EASI and RLS are not as good as variable learning rate EASI in the case of nonstationary environments and time-varying mixing.

4) In Table 4, the similarity coefficient between separated signals is less than 6 % in variable learning rate EASI, demonstrating that the correlation between the separated signals is not high and the separation is very good.

5) Fig. 9 illustrates the exponential increase of . Though was chosen at random, the selection of the initial value has a significant influence on the simulation results. The reason for this requires further study.

6) In Tables 2 and 4, the variable learning rate has a significant impact on the results. The variable learning rate EASI achieves better results than the other two algorithms on every indicator. RLS gives lower similarity between the separated signals than the fixed learning rate EASI (Table 4), although the two are comparable in Table 2. The separated signals obtained by all methods are similar to the source signals, and the correlation between the separated signals is not high.

7) Similarity coefficients and VQM can both be used to evaluate the separation results. In Table 2, when the similarity coefficients are the same (0.9446 for both EASI and RLS), VQM can still distinguish the results (−9.0979 dB and −9.0652 dB, respectively).

5. Conclusions

In this paper, we have described a variable learning rate EASI algorithm for adaptive BSS with nonstationary sources and a slowly time-varying mixing system. In the simulations, this algorithm achieved higher similarity coefficients and lower VQM values than the fixed learning rate EASI and RLS algorithms.

However, the accuracy of the algorithm is closely related to the choice of parameters. The current learning rate and initial value are selected in advance based on experience, rather than using the degree of non-stationarity of the system response signal. In future work, we will identify more complex time-varying situations, such as changing the number of sources or the mixing matrix dimensions, explore the effects of parameter values on the results, and present experimental verifications of actual scenarios.

References

[1] Chen J., Patton R. J. Robust Model-Based Fault Diagnosis for Dynamic Systems. Springer Science and Business Media, 2012.

[2] Wang H., Ji X., Wang X., et al. Fault feature extraction of fan bearing based on improved mathematical morphological unsampled wavelet. Chinese Automation Congress, 2017, p. 3188-3192.

[3] Li Z., Yan X., Tian Z., Yuan C., Peng Z., Li L. Blind vibration component separation and nonlinear feature extraction applied to the nonstationary vibration signals for the gearbox multi-fault diagnosis. Measurement, Vol. 46, Issue 1, 2013, p. 259-271.

[4] Sayoud A., Djendi M., Medahi S., et al. A dual fast NLMS adaptive filtering algorithm for blind speech quality enhancement. Applied Acoustics, Vol. 135, 2018, p. 101-110.

[5] Takada H., Ogawa T., Matsumoto H. Blind signal separation for heart sound and lung sound from auscultatory sound based on the high order statistics. International Symposium on Intelligent Signal Processing and Communication Systems (ISPACS), 2017, p. 201-205.

[6] Cheng W., He Z. J., Zhang Z. S. A comprehensive study of vibration signals for a thin shell structure using enhanced independent component analysis and experimental validation. Journal of Vibration and Acoustics, Vol. 136, Issue 4, 2014, p. 041011.

[7] Hasan A. M., Melli A., Wahid K. A., et al. Denoising low-dose CT images using multi-frame blind source separation and block matching filter. IEEE Transactions on Radiation and Plasma Medical Sciences, Vol. 2, Issue 4, 2018, p. 279-287.

[8] Katoozian D., Faradji F. Singer’s voice elimination from stereophonic pop music using ICA. 3rd Iranian Conference on Intelligent Systems and Signal Processing (ICSPIS), 2017, p. 174-177.

[9] Cheng W., Jianying W., Bineng Z., et al. Negentropy and gradient iteration based fast independent component analysis for multiple random fault sources blind identification and separation. International Journal of Applied Electromagnetics and Mechanics, Vol. 52, Issues 1-2, 2016, p. 711-719.

[10] Qiu L., Liu B., Yuan S., et al. Impact imaging of aircraft composite structure based on a model-independent spatial-wavenumber filter. Ultrasonics, Vol. 64, 2016, p. 10-24.

[11] Zhong Y., Yuan S., Qiu L. Multi-impact source localisation on aircraft composite structure using uniform linear PZT sensors array. Structure and Infrastructure Engineering, Vol. 11, Issue 3, 2015, p. 310-320.

[12] Alexander Y. Learning methods for mechanical vibration analysis and health monitoring. Ph.D. Thesis, Delft University of Technology, 1998.

[13] Popescu T. D., Manolescu M. Blind source separation - a tool for multivariate time series forecasting. The 9th IEEE International Conference on Modelling, Identification and Control (ICMIC), 2017, p. 10-12.

[14] Amini F., Ghasemi V. Adaptive modal identification of structures with equivariant adaptive separation via independence approach. Journal of Sound and Vibration, Vol. 413, 2018, p. 66-78.

[15] Jutten C., Herault J. Blind separation of sources, part I: an adaptive algorithm based on neuromimetic architecture. Signal Processing, Vol. 24, Issue 1, 1991, p. 1-10.

[16] Cardoso J. F., Laheld B. H. Equivariant adaptive source separation. IEEE Transactions on Signal Processing, Vol. 44, Issue 12, 1996, p. 3017-3030.

[17] Xu J., Shen Y., Su Q., et al. A fast online separation algorithm for convolutive mixture model in WSDM. 5th International Conference on Computer Science and Network Technology (ICCSNT), 2016, p. 720-724.

[18] Xie X., Shi Q., Wu R. A new variable step-size equivariant adaptive source separation algorithm. Asia-Pacific Conference on Communications, 2007, p. 479-482.

[19] Zhang T., Li L., Zhang G., et al. Use estimation of Performance Index to realize adaptive blind source separation. 4th International Congress on Image and Signal Processing (CISP), Vol. 5, 2011, p. 2322-2326.

[20] Yuan L., Wang W., Chambers J. A. Variable step-size sign natural gradient algorithm for sequential blind source separation. IEEE Signal Processing Letters, Vol. 12, Issue 8, 2005, p. 589-592.

[21] Shifeng O., Ying G., Gang J., et al. Variable step size algorithm for blind source separation using a combination of two adaptive separation systems. 5th International Conference on Natural Computation, Vol. 3, 2009, p. 649-652.

[22] Gao L., Zhang T., He D., et al. A variable step-size EASI algorithm based on PI for DS-CDMA system blind estimation. 5th International Congress on Image and Signal Processing (CISP), 2012.

[23] Chambers J. A., Jafari M. G., McLaughlin S. Variable step-size EASI algorithm for sequential blind source separation. Electronics Letters, Vol. 40, Issue 6, 2004, p. 393-394.

[24] Enescu M., Koivunen V. Tracking time-varying mixing system in blind separation. Proceedings of the 2000 IEEE Sensor Array and Multichannel Signal Processing Workshop. SAM 2000 (Cat. No.00EX410), 2000.

[25] Deyoung M. R., Evans B. L. Blind source separation with a time-varying mixing matrix. Conference Record of the Forty-First Asilomar Conference on Signals, Systems and Computers, 2007.

[26] Chen H. P., Zhang H., Zhang J. Retrospective on-line EASI blind source separation algorithm. Journal of Signal Processing, Vol. 4, 2013, p. 24-31.

[27] Bulek S., Erdol N. Block adaptive ICA with a time varying mixing matrix. Digital Signal Processing Workshop and 5th IEEE Signal Processing Education Workshop, 2009.

[28] Wang C., Hu Y., Zhan W., et al. Multiple random fault sources adaptive blind separation in situation of time-varying source signals and system. Vibroengineering Procedia, Vol. 14, 2017, p. 82-86.

[29] Zhu X. L., Zhang X. D. Adaptive RLS algorithm for blind source separation using a natural gradient. IEEE Signal Processing Letters, Vol. 9, Issue 12, 2002, p. 432-435.

[30] Nazemi M., Nazarian S., Pedram M. High-performance FPGA implementation of equivariant adaptive separation via independence algorithm for independent component analysis. IEEE 28th International Conference on Application-specific Systems, Architectures and Processors (ASAP), 2017.

[31] Zhu X., Zhang X., Ye J. Natural gradient-based recursive least-squares algorithm for adaptive blind source separation. Science in China, Vol. 47, Issue 1, 2004, p. 55-65.

[32] Yang H. H. Serial updating rule for blind separation derived from the method of scoring. IEEE Transactions on Signal Processing, Vol. 47, Issue 8, 1999, p. 2279-2285.

[33] Cichocki A., Orsier B., Back A., et al. On-line adaptive algorithms in non-stationary environments using a modified conjugate gradient approach. Neural Networks for Signal Processing VII, Proceedings of the 1997 IEEE Signal Processing Society Workshop, 1997, p. 316-325.

[34] Gelle G., Colas M., Delaunay G. Blind sources separation applied to rotating machines monitoring by acoustical and vibrations analysis. Mechanical Systems and Signal Processing, Vol. 14, Issue 3, 2000, p. 427-442.

About this article

This work was supported by the National Natural Science Foundation of China (Grant Nos. 51305142, 51305143), the General Financial Grant from the China Postdoctoral Science Foundation (Grant No. 2014M552429) and the Project of Young Teacher Education Research of Education Department of Fujian Province of China (Grant No. JAT170038). We thank Stuart Jenkinson, Ph.D., from Liwen Bianji, Edanz Group China (www.liwenbianji.cn/ac), for editing the English text of a draft of this manuscript.